Background

Hiring platforms today are optimized for process, not for decision quality.

Most tools are good at moving candidates through stages, but they fail to answer the core hiring question: Do we actually have enough reliable evidence to hire this person?

While working on a hiring-adjacent system at Aptagrim, I observed a recurring pattern: recruiters relied heavily on fragmented signals such as CVs, interviews, and gut feeling rather than on structured proof.

As AI-generated applications and global hiring increased, this gap became even more critical.

The Challenge

Modern hiring faces five major structural problems:

- ATS workflows create administrative movement, not better decisions

- Interviews generate subjective opinions instead of comparable evidence

- AI-assisted applications make authenticity harder to interpret

- Cross-border hiring introduces language and context gaps

- German hiring environments demand auditability, seriousness, and defensible documentation

The result: Decisions are made with scattered signals and weak justification.

My Objective

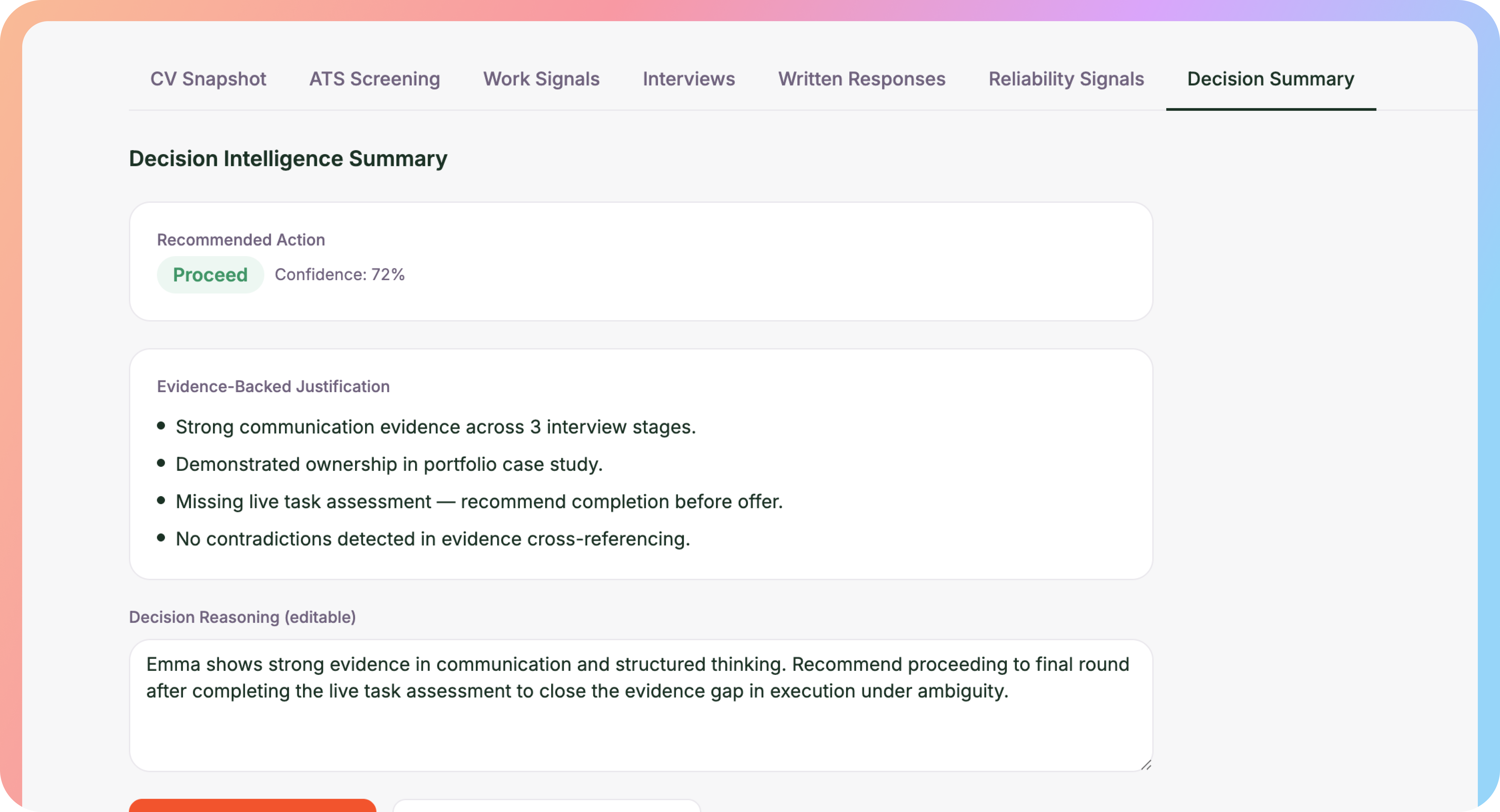

Design a next-generation hiring system that:

- Replaces traditional psychometric scoring with evidence-based evaluation

- Reduces bias without removing human judgment

- Supports bilingual hiring (DE/EN)

- Introduces structured decision intelligence across the pipeline

- Feels premium and modern, not like a generic ATS dashboard

The Core Insight

Traditional psychometrics often fail in real hiring contexts. Personality scores are hard to translate into job performance, create false certainty, and are difficult to defend in serious hiring environments.

Instead of scoring personality, keroHire scores evidence.

The Solution: The Evidence-Led Fit Model

A new evaluation framework built on three structured evidence streams rather than on psychometric traits.

1. Work Signals

Role-specific micro-simulations designed to mirror real job scenarios. Examples: priority trade-off situations, stakeholder conflict responses, decision-making under ambiguity, execution planning tasks.

Output: Decision patterns, reasoning quality, and consistency, not personality labels.

2. Communication Signals

Derived from interviews and written responses, always anchored to real examples. Evaluated dimensions: clarity of thought, logical structure, reasoning depth, consistency across interactions, decision explanation quality.

This transforms interviews from subjective conversations into structured evidence.
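To make "anchored to real examples" concrete, here is a minimal sketch of how an anchored rating might be modeled in Python. It assumes a 1-5 score scale and uses hypothetical names (`CommunicationRating`, `summarize`, and the snake_case dimension keys). It is an illustration, not keroHire's actual data model. The key design choice is that a rating without a quoted example is rejected at construction time, so every score stays traceable to evidence.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, Optional

# Hypothetical keys for the evaluated dimensions listed above.
DIMENSIONS = (
    "clarity_of_thought",
    "logical_structure",
    "reasoning_depth",
    "consistency_across_interactions",
    "decision_explanation_quality",
)


@dataclass(frozen=True)
class CommunicationRating:
    dimension: str
    score: int           # assumed 1-5 scale
    evidence_quote: str  # excerpt from the interview or written response
    source: str          # hypothetical label, e.g. "interview-1"

    def __post_init__(self) -> None:
        if self.dimension not in DIMENSIONS:
            raise ValueError(f"unknown dimension: {self.dimension}")
        if not 1 <= self.score <= 5:
            raise ValueError("score must be between 1 and 5")
        # A score with no concrete example is opinion, not evidence.
        if not self.evidence_quote.strip():
            raise ValueError("rating must be anchored to a concrete example")


def summarize(ratings: Iterable[CommunicationRating]) -> Dict[str, Optional[float]]:
    """Average anchored scores per dimension; None marks a dimension with no evidence."""
    ratings = list(ratings)
    summary: Dict[str, Optional[float]] = {}
    for dim in DIMENSIONS:
        scores = [r.score for r in ratings if r.dimension == dim]
        summary[dim] = sum(scores) / len(scores) if scores else None
    return summary
```

Returning `None` rather than a default score for unrated dimensions keeps missing evidence visible instead of hiding it inside an average, which is the same concern the Reliability Signals layer addresses.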

3. Reliability Signals

A trust layer that highlights evidence gaps instead of forcing premature conclusions. Includes: evidence coverage gaps, contradictions between signals, incomplete assessments, AI-assisted content likelihood bands (LOW / MED / HIGH) with disclaimers.

Output focus: "What should we verify next?" instead of "What is the final verdict?"
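The "what should we verify next?" output can be sketched as a function that turns coverage gaps, cross-stream contradictions, and an AI-assisted likelihood estimate into follow-up prompts. This is a minimal Python illustration under stated assumptions: scores are normalized to 0-1, the stream and dimension keys are hypothetical, and the band thresholds (0.33 / 0.66) and the contradiction threshold (0.4) are illustrative placeholders rather than calibrated values.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, List, Optional


@dataclass(frozen=True)
class Evidence:
    stream: str     # hypothetical keys: "work" or "communication"
    dimension: str  # hypothetical keys, e.g. "prioritization"
    score: float    # assumed to be normalized to 0..1


def likelihood_band(p: float) -> str:
    """Map an AI-assisted-content likelihood estimate to LOW / MED / HIGH.

    The thresholds are illustrative assumptions, not calibrated values.
    """
    if not 0.0 <= p <= 1.0:
        raise ValueError("likelihood must be in [0, 1]")
    if p < 0.33:
        return "LOW"
    if p < 0.66:
        return "MED"
    return "HIGH"


def verify_next(
    evidence: Iterable[Evidence],
    required: Iterable[str],
    ai_likelihood: Optional[float] = None,
    contradiction_gap: float = 0.4,
) -> List[str]:
    """Return follow-up prompts instead of a final verdict."""
    by_dim: Dict[str, List[Evidence]] = {}
    for e in evidence:
        by_dim.setdefault(e.dimension, []).append(e)

    prompts: List[str] = []
    # Coverage gaps: required dimensions with no evidence at all.
    for dim in required:
        if dim not in by_dim:
            prompts.append(f"Gap: no evidence yet for '{dim}'")
    # Contradictions: two or more scores for one dimension that diverge by the threshold.
    for dim, items in by_dim.items():
        scores = [e.score for e in items]
        if len(scores) > 1 and max(scores) - min(scores) >= contradiction_gap:
            streams = ", ".join(sorted({e.stream for e in items}))
            prompts.append(f"Contradiction: '{dim}' scores diverge (streams: {streams})")
    # AI-assisted likelihood is reported as a band with a disclaimer, never as proof.
    if ai_likelihood is not None:
        band = likelihood_band(ai_likelihood)
        if band != "LOW":
            prompts.append(
                f"AI-assisted content likelihood {band} (an estimate, not proof): "
                "confirm authorship in a live follow-up"
            )
    return prompts
```

Surfacing the likelihood only as a coarse band, and only when it is not LOW, keeps it in the role the design intends: a prompt for verification rather than a verdict on the candidate.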