Background

Smart assistants today are reactive tools. They respond when spoken to, but rarely understand context, emotion, or environmental nuance.

Homes are dynamic spaces. Noise levels shift. Lighting changes. Multiple users coexist. Yet most assistants treat every moment the same.

Ziggy was designed as a context-aware AI companion, one that adapts quietly to space, behavior, and presence rather than demanding attention.

The Challenge

Through research and workflow mapping, three friction patterns became clear:

- Assistants interrupt rather than blend into the environment

- Voice-only interaction limits accessibility and nuance

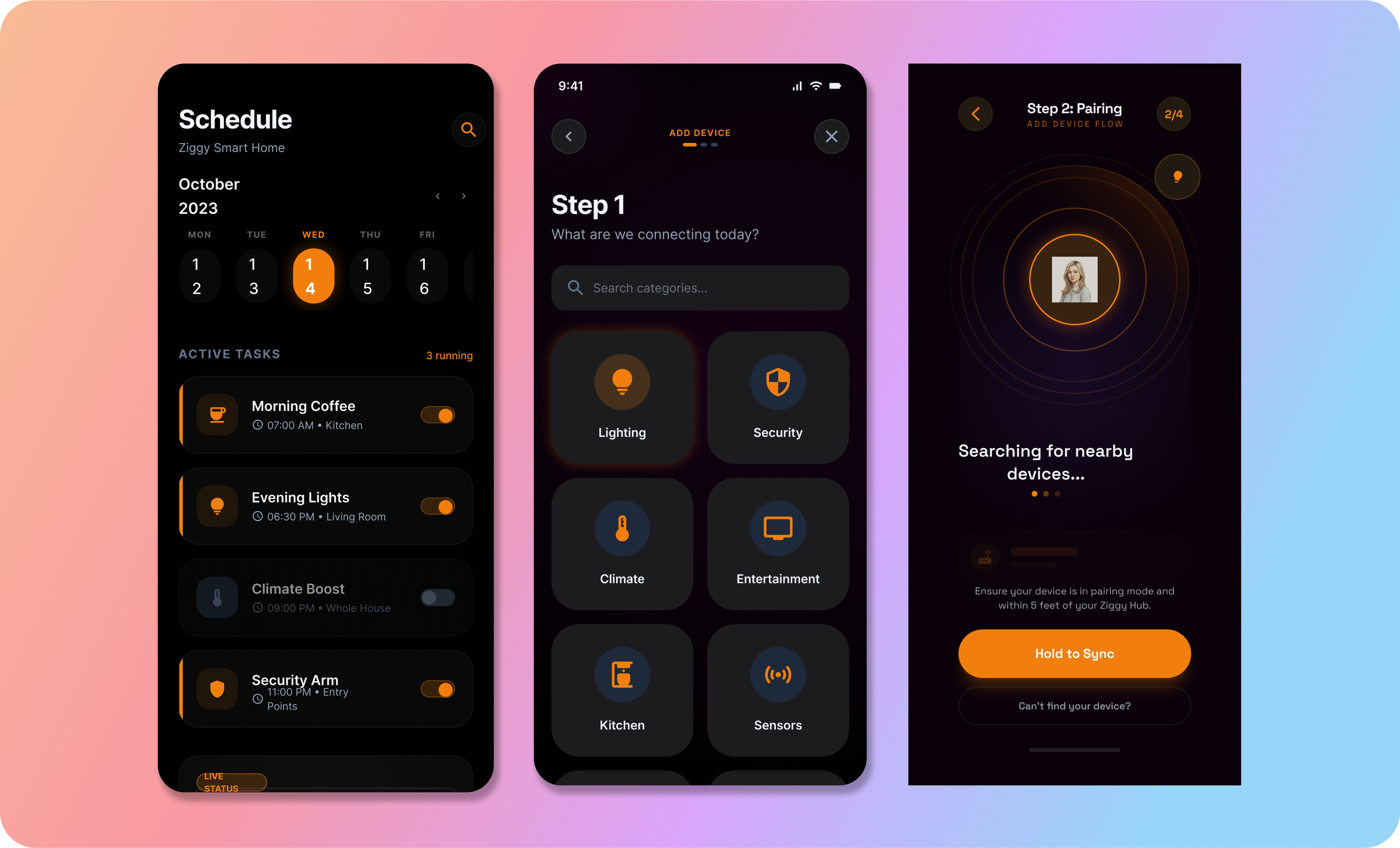

- Smart home control is fragmented across apps and devices

The deeper issue wasn't functionality. It was cognitive friction and emotional disconnect.

My Objective

To design a physically embodied AI assistant that:

- Understands spatial and behavioral context

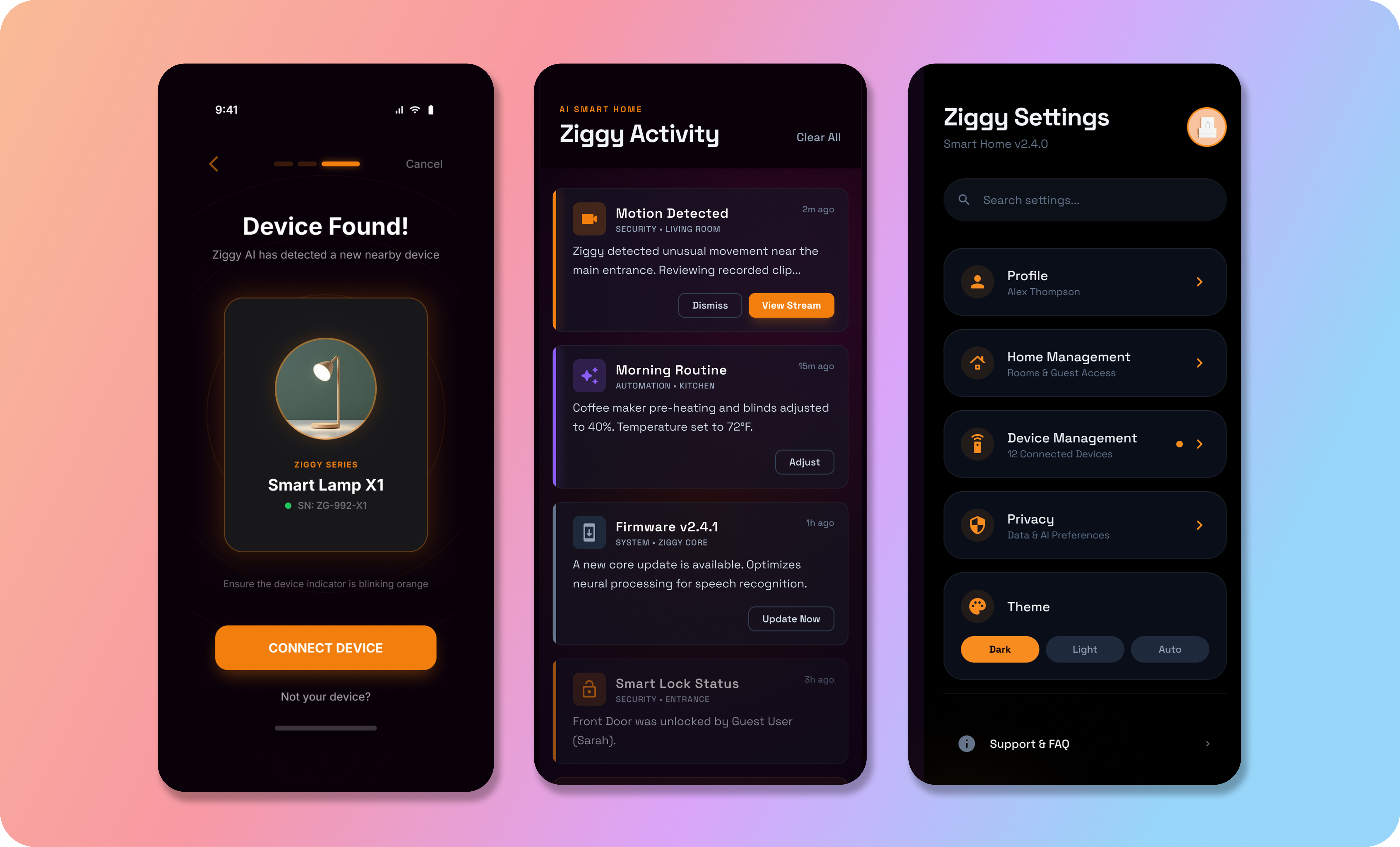

- Communicates visually, verbally, and ambiently

- Reduces command-based interaction

- Feels calm, trustworthy, and non-intrusive

The Solution

Ziggy transforms smart home interaction from command-based control to context-aware assistance.

It senses the environment, user behavior, and presence before responding, then combines ambient light cues, subtle motion, and optional voice into one unified system.

Result: fewer interruptions, less friction, and a calmer, more intuitive home experience.